OpenCV for Processing is an open-source library for basic analysis and recognition within the Processing creative environment. It simplifies the integration of OpenCV, a powerful library for optical recognition.

- Software: OpenCV Library

- Platforms: Mac OS X | Linux | Windows

- Design & Development: Stéphane Cousot, Douglas Edric Stanley

- Release date: July, 2008

- Development platform: Processing

OpenCV is an open-source library for integrating basic computer vision analysis and tracking within the Processing environment. It simplifies access to the powerful OpenCV library and offers a Java/JNI wrapper for artists, designers and multimedia developers looking to integrate OpenCV into their projects.

Stéphane Cousot and I are announcing today the public availability of our OpenCV Library for Processing. Although the library has been ready (in various states of undress) for a few months now, we have been using the intervening time to learn more in-depth how OpenCV works, debug, simplify method calls, test the library in real-world situations, add various features, plan out features for future releases, and -- most importantly -- write coherent documentation for those Processing users discovering OpenCV for the first time. It might seem like a light start, given the limited number of functions we've made available from the impressive Intel library, but we wanted to make sure each component worked as promised. Also, we wanted to make working with it as painless as possible for Processing users, and follow the Processing logic of getting complex things done with a limited number of simple methods. And finally, we wanted to make sure it was stable enough in a real-world installation context.

Download link: here

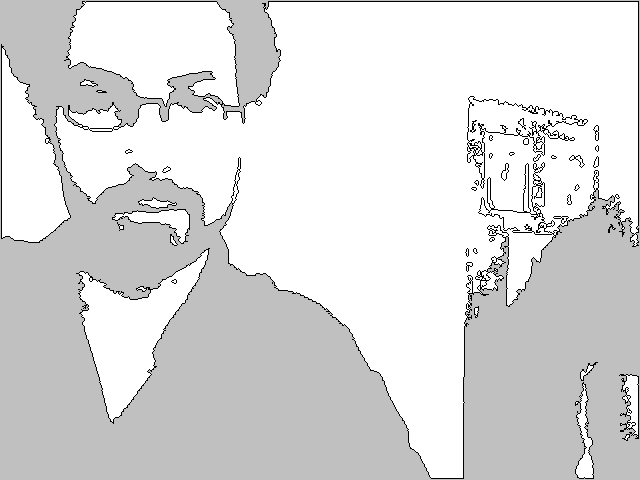

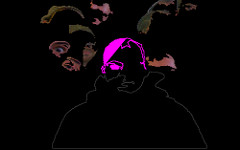

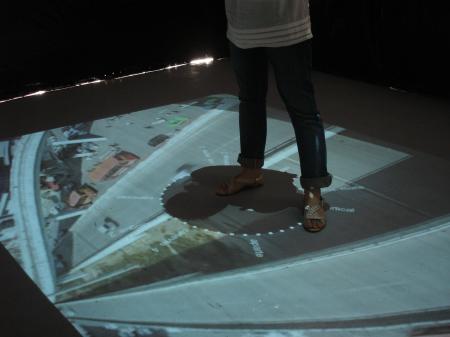

As for features, you have internal (via OpenCV) and external (via Processing) capture, basic image processing (threshold, comparison, extraction, etc.), contour tracking, face & body tracking, and a few other little goodies thrown in here and there. So, as it stands, you can, for example, recognize someone's face, grab the outline of that face, and go into the image data of that person's face to extract the face data. Or, you could use infrared filters with lights pointed at or placed on your body (see below), a multi-touch surface, or some other artificial lighting condition to grab light blobs for finger or body-part tracking and use that data somehow in Processing. There are obviously many possibilities.
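To make the "grab light blobs" idea concrete, here is a minimal sketch in plain Java of the general technique: threshold a grayscale frame, then group the bright pixels into connected regions. It is not the library's implementation and uses none of its API. The class name `BlobSketch`, the methods `threshold` and `findBlobs`, and the tiny hand-built frame are all hypothetical, chosen only for illustration. The library itself delegates this kind of work to OpenCV.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;

// Hypothetical illustration only: not part of the OpenCV library for Processing.
public class BlobSketch {

    // Binary threshold: true where the pixel is brighter than `level`.
    static boolean[][] threshold(int[][] gray, int level) {
        int h = gray.length, w = gray[0].length;
        boolean[][] mask = new boolean[h][w];
        for (int y = 0; y < h; y++)
            for (int x = 0; x < w; x++)
                mask[y][x] = gray[y][x] > level;
        return mask;
    }

    // Flood-fills 4-connected bright regions, scanning row by row.
    // Each blob is returned as {minX, minY, maxX, maxY, area}.
    static List<int[]> findBlobs(boolean[][] mask) {
        int h = mask.length, w = mask[0].length;
        boolean[][] seen = new boolean[h][w];
        List<int[]> blobs = new ArrayList<>();
        int[][] steps = {{1, 0}, {-1, 0}, {0, 1}, {0, -1}};
        for (int y = 0; y < h; y++) {
            for (int x = 0; x < w; x++) {
                if (!mask[y][x] || seen[y][x]) continue;
                int[] blob = {x, y, x, y, 0};
                Deque<int[]> stack = new ArrayDeque<>();
                stack.push(new int[]{x, y});
                seen[y][x] = true;
                while (!stack.isEmpty()) {
                    int[] p = stack.pop();
                    // Grow the bounding box and count the pixel.
                    blob[0] = Math.min(blob[0], p[0]);
                    blob[1] = Math.min(blob[1], p[1]);
                    blob[2] = Math.max(blob[2], p[0]);
                    blob[3] = Math.max(blob[3], p[1]);
                    blob[4]++;
                    for (int[] s : steps) {
                        int nx = p[0] + s[0], ny = p[1] + s[1];
                        if (nx >= 0 && nx < w && ny >= 0 && ny < h
                                && mask[ny][nx] && !seen[ny][nx]) {
                            seen[ny][nx] = true;
                            stack.push(new int[]{nx, ny});
                        }
                    }
                }
                blobs.add(blob);
            }
        }
        return blobs;
    }

    public static void main(String[] args) {
        // A made-up 6x5 grayscale frame with two bright spots.
        int[][] frame = new int[5][6];
        frame[1][1] = 255; frame[1][2] = 255; frame[2][1] = 250;
        frame[4][5] = 200;
        for (int[] b : findBlobs(threshold(frame, 128)))
            System.out.println("blob x=" + b[0] + ".." + b[2]
                    + " y=" + b[1] + ".." + b[3] + " area=" + b[4]);
    }
}
```

In a real installation the frame would come from a camera, and the bounding boxes (or their centers) would be what you hand over to your drawing code.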

Some of the things you cannot yet do, and which we plan to add to the library: motion history images and optical flow (pixel tracking), Kalman predictions, color tracking, histograms, and obviously the list could go on and on. I already have a lot of these functions working in OpenFrameworks for an installation (soon to be announced) which will be exhibited later this summer. So consider the current release a starting point, with what we believe is a fairly clean start, but we could be wrong on that. The code is open, so go in and dig around -- perhaps you can give us some good advice or add to the code yourself.

Special note: this library will also work for pure Java work, and yes, there is Java documentation.

So, why did it take so long? Well... when I say that we've been busy testing it in laboratory and real-world instances, I mean it. I've gotten some mail on this recently, so I should make things a little clearer: if you ever wondered why I don't post as much as I (or apparently some of you) would like, it's because I'm busy elsewhere working on so many @#&*$% projects. I do not just work on my own projects and I am definitely not a full-time blogger: I teach, run an atelier, collaborate with other artists, do research, write, write code, consult, curate, and somewhere in there, I'm a dad to two lovely and brilliant young (or youngish) women. Since I don't have a secretary, nor a double, that means some creative Douglas-time-sharing. So when I'm quiet here, it most certainly means that I'm busy doing one of these other things. And over the past few months, that has worked out to about 50% of my creative work involving OpenCV in Processing and OpenFrameworks.

And on Stéphane's side, he's been just as busy working over the past six months on a gazillion projects for various artists, art students, and researchers; and only a part of that work involved this OpenCV library.

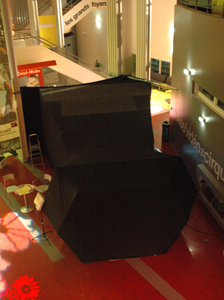

So, what have we been doing with it? The library has already been used in numerous projects at the Atelier Hypermédia, in external workshops at schools such as the Institut d'Arts Visuels in Orléans, as a research tool at the DRII laboratory (Dispositifs relationnels : Installations Interactives) at the École Nationale Supérieure d'Arts Décoratifs in Paris, and in two public works: one an installation for Gamerz 0.2, the other a component of a haptic dance performance-dispositif by Wolf Ka and his studio. Finally, we used the library to prototype an urban-design project by Lei Zhao for the Studio Lentigo, Marseille, although this project was eventually finished in OpenFrameworks due to the high video performance demands of the installation. So all in all, about a dozen different projects over the past few months.

Here are a few images/videos with links for more information on the author(s)/works:

- Wolf Ka + cie, Moving By Numbers (see link for video).

- Atelier Hypermédia, C'est toi la patate.

- Lei Zhao, Node City (follow link for more videos).

- Fabien Artal, Diplôme DNSEP (with the congratulations of the jury), L'école supérieure d'Aix-en-Provence. There is a video, but you'll have to jump to 23:15 for Fabien's installation.

- Students of the Institut d'Arts Visuels, Workshop Légerté + Nuit des musées, Orléans (follow this link for -- very poor quality -- video).

- I'll leave off with these images from an installation Stefan Schwabe created with his collaborator Sebastian Neitsch in a public pool in Halle. As swimmers wade about, their movements are tracked by a camera and modify an image built from four overlapping projections onto the dome of the rotunda. It should be mentioned that, like Lei Zhao's Node City, this piece used Processing only during the prototyping phase (the final work was created in vvvv). Nevertheless, Stefan & Sebastian's project was an important one in our year-long experimentation with various forms of video surveillance in art and design installations. (See Stefan's website for video of this installation.)

Update: I used the wrong terminology. Oops. We decided to call this version v01, precisely to suggest that there is still much progress to be made. Previously I called it v1.0, which suggests something very different!